When manufacturers build TVs, monitors, and other displays, they advertise a certain resolution. You’ve probably seen a lot of terms like 480p, 720p, 1080p, and 4K. What do these numbers mean, and what is each type of display best for?

In this guide, we’ll discuss the benefits and drawbacks of each screen resolution. We’ll also talk about what resolution is, what the “p” means, and whether 8K TVs are worth the investment. Are you ready to take a deep dive into screen resolutions? Let’s take a closer look!

Video Resolution Basics

Before we talk about different resolutions, we have to understand what resolution means. A screen’s resolution describes the number of pixels that can be displayed. The more pixels on the screen, the higher the resolution.

Pixels are the individual dots that make up your image. Each pixel can display a wide range of colors. By changing the color of individual pixels, the image changes. With more pixels, you can get a sharper image. You can zoom in closer and see more details.

Imagine you’re building a pyramid, and you want the sides as smooth as possible. If you build it with giant rectangular blocks, the edges will be all jagged. Build it out of smaller bricks, and the edges will be less jagged. Use sand and cement to make a mortar, and you can make a smooth surface. The smaller the individual building blocks, the smoother your pyramid.

For digital displays, we measure resolution with a set of two numbers. The first is the number of pixels in a row, and the second is the number of pixels in a column. For example, a screen that’s 1,920 pixels wide and 1,080 pixels high has a resolution of 1,920 x 1,080. Most screens these days have a standard 16:9 aspect ratio. For those screens, the width follows from the height, so we can simply use the second number as shorthand. So a 1,920 x 1,080-pixel monitor has a resolution of 1080p.
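To make the arithmetic concrete, here is a minimal Python sketch that turns a width and height into a total pixel count, a simplified aspect ratio, and the shorthand name. The function name `describe_resolution` is made up for illustration, and the shorthand assumes a progressive-scan display.

```python
from math import gcd


def describe_resolution(width: int, height: int) -> dict:
    """Summarize a resolution given as pixels per row and pixels per column."""
    divisor = gcd(width, height)  # largest number dividing both dimensions
    return {
        "total_pixels": width * height,
        "aspect_ratio": f"{width // divisor}:{height // divisor}",
        "shorthand": f"{height}p",  # assumes progressive scan
    }


print(describe_resolution(1920, 1080))
# {'total_pixels': 2073600, 'aspect_ratio': '16:9', 'shorthand': '1080p'}
```

Feeding in 1,280 x 720 gives the same 16:9 ratio, which is why the height alone is enough to identify these screens.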

Common Video Resolutions

There are many video resolutions, not all of which are standard. For this guide, we’re going to focus on the resolutions most commonly found on consumer electronics. These are 480p, 720p, 1080p, and 4K. Here’s a closer look at each one, what it’s good for, and when it should be avoided.

480p

480p is the oddball on our list, since it doesn’t have a 16:9 aspect ratio. Instead, it has a more old-fashioned 4:3 aspect ratio. This is the upper threshold for what we now call standard definition, or SD.

480p used to be a great picture quality… back in the early 2000s. It remained useful for several years if all you wanted was a workmanlike TV for watching the local news. Nowadays, you’ll have a hard time finding a screen in this resolution. Maybe you’ll see it on some low-end cell phones, but that’s about it.

720p

While 480p represents the high water mark of SD technology, 720p is the bare minimum for an HD display. 720p screens have an aspect ratio of 16:9, with 1,280 x 720 pixels. You won’t find a lot of 720p TVs these days, but they remain a viable technology for a few reasons.

For one thing, on a very small screen, you might not be able to tell the difference. Once the pixels are too small to see individually, a higher resolution becomes irrelevant.

For another thing, a lot of streaming services still stream in 720p for bandwidth purposes. This includes even the biggest players like Netflix and HBO Max. Both services technically provide 1080p video. But at times of high traffic, they’re forced to draw down to 720p. If you’re watching Netflix during prime time, there’s a good chance your neighborhood’s internet trunk line is at peak capacity. At that point, Netflix has two choices. They can reduce the resolution, or they can keep interrupting your video with buffering screens. Guess what they choose?

If you’re looking for a good example of relevant 720p technology, check out the Asakuki Avigator 410W. It’s a projector with a bright, vibrant display that supports 1080p inputs. Our only complaint was the weak speakers.

1080p

1080p displays have a resolution of 1,920 x 1,080 pixels, and are commonly referred to as “full HD.” This is still the most common resolution, and is compatible with the widest variety of devices. For example, modern game consoles will output 1080p video, but not 720p. If your 720p TV doesn’t support a 1080p input, you won’t be able to use the console.

1080p is the current standard for broadcast TV, cable TV, and streaming services. While everybody loves the idea of higher resolution, you start to run into bandwidth limitations. Broadcasting TV at better-than-1080p resolution would require more bandwidth or yet-to-be-invented technology.

If a 1080p display sounds good to you, consider the AUZAI Computer Monitor. It’s a versatile monitor with a built-in blue light filter that’s great for videos and office work. That said, the design is less than ideal for gaming.

4K

4K is actually a bit of a misnomer. It doesn’t have 4,000 pixels in either direction; it has a resolution of 3,840 x 2,160. It’s called “4K” because the width is close to 4,000 pixels. It also happens to have four times as many pixels as a 1080p TV. Put another way, you could fit four 1080p videos on a 4K display without any loss in picture quality.
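The “four 1080p videos” comparison is easy to check. The short Python sketch below compares the two pixel counts and works out where four full HD frames would sit on a 4K canvas. The helper names `pixel_count` and `tile_origins` are only illustrative.

```python
FULL_HD = (1920, 1080)
UHD_4K = (3840, 2160)


def pixel_count(resolution):
    """Total pixels for a (width, height) pair."""
    width, height = resolution
    return width * height


def tile_origins(canvas, tile):
    """Top-left corners of every whole tile that fits on the canvas."""
    columns = canvas[0] // tile[0]
    rows = canvas[1] // tile[1]
    return [(c * tile[0], r * tile[1]) for r in range(rows) for c in range(columns)]


print(pixel_count(UHD_4K) / pixel_count(FULL_HD))  # 4.0
print(tile_origins(UHD_4K, FULL_HD))
# [(0, 0), (1920, 0), (0, 1080), (1920, 1080)]
```

Both dimensions double, so the four frames form a clean 2 x 2 grid with no pixels left over.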

4K TVs aren’t necessary if all you’re doing is watching television. That said, Ultra HD Blu-ray discs can provide 4K video, which is great for enjoying your favorite movies. Moreover, today’s game consoles and gaming PCs are all capable of 4K resolution. If you’re a gamer, your next screen really should be 4K.

If 4K sounds more your speed, take a look at the Anker Nebula Cosmos Max. It has a native resolution of 4K, which is pretty impressive for a projector. The Android operating system supports more than 5,000 video apps, and it features automatic focus and keystone adjustment. It’s expensive, though, and it only works when you’re connected to the web.

What Does the “p” Mean in Screen Resolutions?

Early HD TVs used a technology called interlacing. Instead of a video frame being shown all at once, it would be shown in two stages. First the odd-numbered rows of pixels would appear, followed by the even-numbered ones. This technology allowed broadcasters to provide higher resolutions at lower bandwidths. Because each stage carried only half the rows, they needed only about half the bandwidth to send it. Manufacturers would indicate that their TVs used interlacing technology by putting an “i” after the resolution, as in 1080i.

The downside of interlacing is that it reduces the image quality. You don’t see flashing or flickering. The interlacing happens 60 times per second, far too fast for you to consciously notice it. But your brain notices it, and it can make videos look janky.

With better technology, broadcasters could send entire frames all at once. This method is called “progressive scanning.” Because every row of a frame is drawn in the same pass, videos look more fluid and natural. Manufacturers started using “p” to indicate that their displays were better than old interlaced ones. Nowadays, almost all HD displays use progressive scan technology.
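The difference between the two methods can be sketched in a few lines of Python. This is a simplified illustration, not how a real TV or broadcast chain works: a “frame” here is just a list of rows, and the function names `split_into_fields` and `weave` are made up for the example.

```python
def split_into_fields(frame):
    """Interlacing: divide one frame into two half-height fields."""
    odd_rows = frame[0::2]   # rows 1, 3, 5, ... counting from 1
    even_rows = frame[1::2]  # rows 2, 4, 6, ...
    return odd_rows, even_rows


def weave(odd_rows, even_rows):
    """Rebuild the full frame by alternating rows from the two fields."""
    frame = []
    for index in range(len(odd_rows) + len(even_rows)):
        source = odd_rows if index % 2 == 0 else even_rows
        frame.append(source[index // 2])
    return frame


# A toy six-row frame. Progressive scan would send this list as-is.
frame = [f"row {number}" for number in range(1, 7)]

odd_field, even_field = split_into_fields(frame)
print(odd_field)   # ['row 1', 'row 3', 'row 5']
print(even_field)  # ['row 2', 'row 4', 'row 6']
print(weave(odd_field, even_field) == frame)  # True
```

Each field holds half the rows, which is where the bandwidth saving comes from. The catch is that in real video the two fields are captured at slightly different moments, so anything that moves between them no longer lines up when the rows are woven back together.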

What About 8K TVs?

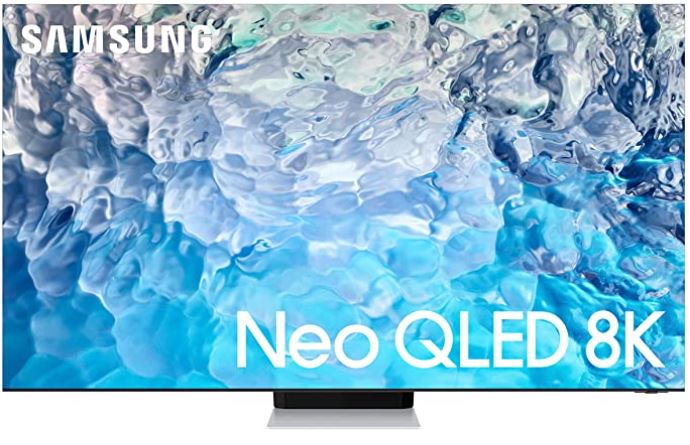

8K displays represent the bleeding edge of display technology. These screens have four times as many pixels as 4K, or 16 times as many as 1080p. The total resolution is 7,680 x 4,320. This is insane video quality, but it’s probably not worth buying an 8K TV quite yet.

To begin with, almost nobody is making 8K content. The Xbox Series X and PlayStation 5 claim to be 8K-capable. But in practice, they can only reach that level of quality by making other sacrifices in game quality. You’re not likely to see more than a few 8K titles, even over the entire console generation.

For another thing, you’d need a very large screen to be able to tell the difference between 4K and 8K. We’re talking about a screen with 33,177,600 pixels. An 8K jumbotron would make sense. But even if your TV is wall-sized, you’ll be hard-pressed to notice an improvement in quality.
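One rough way to see why screen size matters is to work out how tightly the pixels are packed. The Python sketch below computes pixels per inch from the resolution and the diagonal size. The 65-inch diagonal is a hypothetical example, not a figure from this guide, and pixel density is only one factor in what your eyes can resolve (viewing distance matters too).

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_inches):
    """Pixel density along the screen diagonal."""
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px / diagonal_inches


SCREEN_DIAGONAL_INCHES = 65  # hypothetical living-room TV size

for name, (width, height) in {"4K": (3840, 2160), "8K": (7680, 4320)}.items():
    density = pixels_per_inch(width, height, SCREEN_DIAGONAL_INCHES)
    print(f"{name}: {width * height:,} pixels, about {density:.0f} pixels per inch")
# 4K: 8,294,400 pixels, about 68 pixels per inch
# 8K: 33,177,600 pixels, about 136 pixels per inch
```

At that size, 4K pixels are already well under half a millimeter wide, so halving them again is hard to appreciate from the couch.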

Final Thoughts

As you can see, each screen resolution has its own benefits. Buy 720p if you want a barebones display that gets the job done. Buy 1080p if you’re primarily interested in streaming or cable TV. If you’re a gamer or Blu-ray enthusiast, 4K is the way to go. For the time being, 8K is just overkill.

Meet Ry, “TechGuru,” a 36-year-old technology enthusiast with a deep passion for tech innovations. With extensive experience, he specializes in gaming hardware and software, and has expertise in gadgets, custom PCs, and audio.

Besides writing about tech and reviewing new products, he enjoys traveling, hiking, and photography. Committed to keeping up with the latest industry trends, he aims to guide readers in making informed tech decisions.