Refresh rate and frame rate are two terms that are commonly used interchangeably. Indeed, when you’re shopping for a monitor or display, it can be virtually impossible to tell the difference. As a matter of fact, we’ve used the terms interchangeably many times. And in common parlance, there’s not much of a difference. Both terms talk about the same thing: how many images can be displayed on the screen in a single second. For example, a 60 FPS video and 60Hz video both display 60 images per second. But there are actually important differences between the two terms.

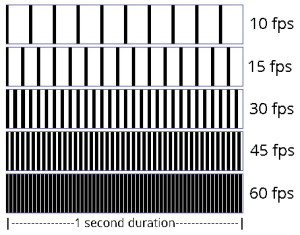

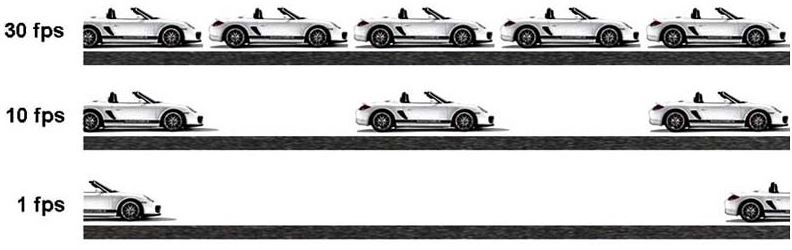

The confusion comes from the nature of video itself. As you probably know, a video consists of a series of still images that are shown in rapid succession. When the images are shown this quickly, they create the illusion of motion. The more images are shown per second, the smoother and more lifelike the video is. For example, if you watch an old silent movie, it looks choppy and robotic. This is because silent films were shot at low and inconsistent frame rates, often around 16 frames per second. As technology advanced, Hollywood moved to a standard of 24 frames per second. DVDs in NTSC regions use roughly 30 frames per second. And some movies have even moved to 60 frames per second. Video games are typically variable, and will run as fast as your hardware and settings allow.

So, what’s the difference between refresh rate and frame rate? In brief, it comes down to the type of signal. Refresh rate refers to video signals, like HDMI, DVI, or VGA. Frame rate, on the other hand, refers to pre-recorded video, such as a YouTube video or a DVD. Why does this matter? Let’s take a closer look!

Refresh Rate Basics

The term “refresh rate” actually came into use after “frames per second”. While “frames per second” has been used since the earliest days of film, refresh rate originated with television. Earlier televisions used a cathode ray tube (CRT) design. This design consists of three primary parts: an electron gun, a glass tube, and a phosphor screen. The electron gun shoots a beam of electrons through the glass tube onto the phosphor screen. When electrons strike the screen, individual pixels will light up in a particular color, creating an image. The electron beam sweeps across the screen incredibly fast, creating several images in each second. Each time the beam creates a new image, the screen is said to have “refreshed”. The number of times the image refreshes each second is called the “refresh rate”, and is measured in hertz (Hz).

CRT technology was the best technology available at the time, but it had a couple of distinct disadvantages. First off, the technology was only suitable for relatively large pixels. This placed a limitation on screen resolution. Secondly, when the electron beam gets to the bottom of the screen, it needs to return to the top. During this return process, there’s a brief pause when the screen goes blank. This is not noticeable when the screen has a high refresh rate of 60Hz or more. However, at low refresh rates, it can produce noticeable flicker.

Thankfully, we have better technology these days. LCD screens, for example, are a significant upgrade over CRT displays. LCD pixels each work independently, reconfiguring their liquid crystal structure many times per second. As a result, there’s no flicker or blanking regardless of the video signal’s refresh rate. The screen is always on. Pretty cool!

So, what does all this have to do with frame rate? To understand, we’ll first need to talk about the way video is actually encoded on a digital medium. Let’s take a closer look, and explain how all this works.

Frame Rate and Video Encoding

All digital video needs to be encoded to be useful. Why? Let’s take a quick look at 1080p video. With 1080p, you’re looking at a resolution of 1920 x 1080 pixels. You’re also looking at 60 frames per second, and 24 bits per pixel. So, 1920 x 1080 x 60 x 24 bits per second, which is about 3 gigabits per second, or roughly 373 megabytes per second. Ouch! That’s far more than a typical home internet connection can handle. Even your Blu-ray drive can’t handle it. Moreover, it’s impractical, even for physical media like a disc or SSD. At that rate, a single minute of uncompressed video would take up about 22 gigabytes. That works out to almost 2.7 terabytes for a 2-hour movie!
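If you want to check that arithmetic yourself, here is a minimal Python sketch. The function and variable names are made up for illustration. It prints the rate in both decimal megabytes and binary mebibytes, since the two conventions give slightly different numbers.

```python
# Uncompressed 1080p data rate, using the figures from the paragraph above.
WIDTH, HEIGHT = 1920, 1080
FPS = 60
BITS_PER_PIXEL = 24


def raw_bytes_per_second(width, height, fps, bits_per_pixel):
    """Bytes per second needed for uncompressed video."""
    return width * height * fps * bits_per_pixel // 8


rate = raw_bytes_per_second(WIDTH, HEIGHT, FPS, BITS_PER_PIXEL)
per_minute = rate * 60
per_movie = rate * 60 * 120  # a 2-hour movie is 120 minutes

print(f"{rate / 1e6:.0f} MB/s ({rate / 2**20:.0f} MiB/s)")
print(f"{per_minute / 1e9:.1f} GB per minute")
print(f"{per_movie / 1e12:.2f} TB for a 2-hour movie")
```

Swap in 3840 and 2160 for the width and height to see how quickly the numbers grow for 4K.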

The solution to this problem is compression. Different encoding protocols compress data in different ways. One method is simply to reduce the color space. For instance, changing from an RGB24 color space to YUV420 will reduce the bits per pixel from 24 to 12.
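Where does the 12 come from? YUV420 keeps a brightness (Y) value for every pixel, but stores the two color channels at half resolution in each direction. The sketch below works out the average bits per pixel under that assumption, with 8 bits per sample. The `bits_per_pixel` helper is a made-up name for illustration, not part of any library.

```python
def bits_per_pixel(bit_depth, chroma_h_div, chroma_v_div):
    """Average bits per pixel for one full-resolution luma plane
    plus two chroma planes whose resolution is divided by the
    given horizontal and vertical factors."""
    luma = bit_depth
    chroma = 2 * bit_depth / (chroma_h_div * chroma_v_div)
    return luma + chroma


print(bits_per_pixel(8, 1, 1))  # 4:4:4, no subsampling -> 24.0
print(bits_per_pixel(8, 2, 1))  # 4:2:2, half horizontal -> 16.0
print(bits_per_pixel(8, 2, 2))  # 4:2:0, half in both directions -> 12.0
```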

Different encoding methods use different techniques, but there’s one popular trick they have in common: they take advantage of the fact that consecutive frames often differ very little. Take your average documentary. We binge-watched the popular Netflix documentary Jeffrey Epstein: Filthy Rich over the weekend. Much of the show consists of people being interviewed. The interviewee’s face is moving, their body is moving, but the background is just sitting still. A compression algorithm will simply flag those static areas, to tell the decoder the pixels remain the same. This can significantly reduce the required bandwidth. In effect, the frame rate can be very high, but much of the screen won’t need to refresh at all.
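To make the idea concrete, here is a toy Python sketch that compares two tiny frames block by block and reports which blocks changed. It illustrates the general principle only. Real codecs are far more sophisticated, and the function name, block size, and frame data here are all invented for the example.

```python
def changed_blocks(prev, curr, block=2):
    """Return the (row, col) index of every block whose pixels differ
    between two frames. Frames are equal-sized 2D lists of pixel values."""
    height, width = len(curr), len(curr[0])
    changed = []
    for top in range(0, height, block):
        for left in range(0, width, block):
            rows = range(top, min(top + block, height))
            cols = slice(left, left + block)
            # A block counts as changed if any of its rows differ.
            if any(prev[r][cols] != curr[r][cols] for r in rows):
                changed.append((top // block, left // block))
    return changed


# Two 4x4 grayscale frames. Only one pixel in the top-right block changes.
frame_a = [
    [10, 10, 50, 50],
    [10, 10, 50, 50],
    [10, 10, 10, 10],
    [10, 10, 10, 10],
]
frame_b = [
    [10, 10, 60, 50],
    [10, 10, 50, 50],
    [10, 10, 10, 10],
    [10, 10, 10, 10],
]

print(changed_blocks(frame_a, frame_b))  # [(0, 1)]
```

In this example, only one of the four blocks would need new data. The other three could be marked as “same as before”.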

Are More Frames Always Better?

So, is it always better to have more frames per second? It depends on what you’re doing. For pre-recorded video, not necessarily. Movies and TV shows, for example, are typically recorded with a particular frame rate in mind. Peter Jackson’s The Hobbit trilogy was actually criticized for having too high of a frame rate! Many audiences, including critics, found it to be nauseating and disorienting. The increased frame rate was realistic, but it was too realistic. It broke down the “fourth wall”, the unspoken barrier between the screen and the audience. As a result, it actually kept reminding people that they were watching a movie. For many, this broke the suspension of disbelief, because they were focused more on the screen than on the story.

On the other hand, many sporting events are broadcast at high frame rates. The reason for this is to reduce motion blur. When you’re watching a game of football or baseball, you’re watching high-speed, real-time action. You need to see where the ball is, what the quarterback is doing, and so on. At a low frame rate, this can turn into a confusing, blurry mess. And a display with a high refresh rate will not help if the source frame rate is low.

Higher frame rates are also essential for gaming. Because most game content is dynamically generated, a low frame rate can make motion look choppy and blurry. A frame rate that is out of step with the display’s refresh rate can even lead to “tearing”, where the screen shows parts of two different frames at once and the image appears split along a horizontal line. The higher and steadier the frame rate, the more accurately these images will render.

Low frame rates, on the other hand, can be beneficial for several reasons. One of the most obvious is in media with no motion, such as a presentation made up of still slides. In this case, a high frame rate would simply waste disk space. Another example is video capture cards. You may have a high-FPS input, but your capture card might record video at a lower frame rate. The issue here is the speed of the capture card. Most capture cards simply can’t handle ultra-high frame rates, so they record fewer frames. The benefit to you is that by doing this, they can keep up with the incoming signal.

Final Verdict

As you can see, refresh rate and frame rate are similar, but not identical. On the one hand, both terms refer to the number of frames displayed per second. However, they’re talking about fundamentally different things. Frame rate referred originally to the number of images per second in a film strip. As technology advanced, it also came to refer to the number of images per second in raw video signals. Importantly, this number of images can vary based on settings, capture devices, and other factors.

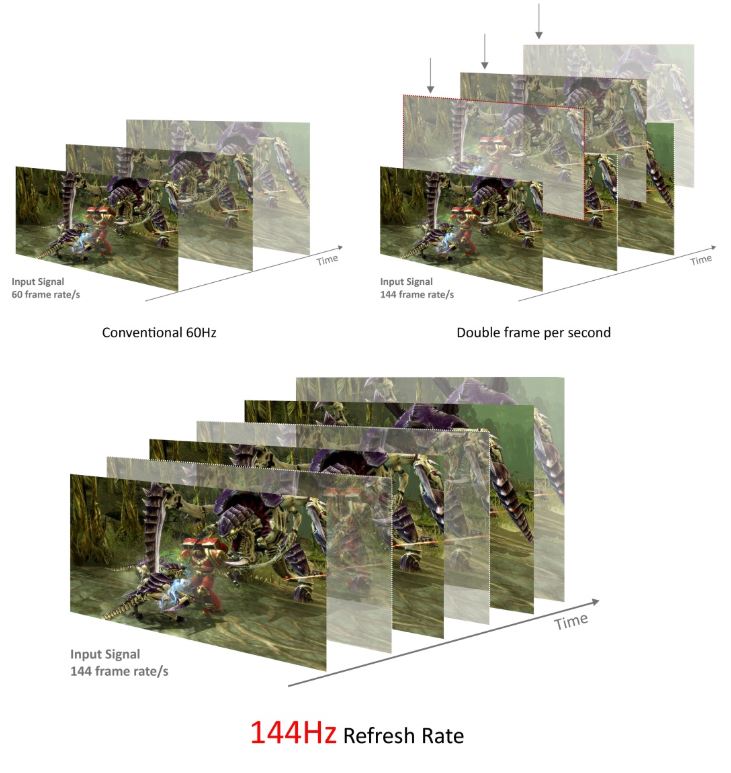

On the other hand, refresh rate refers primarily to hardware, not to the video source. Refresh rate describes the number of times per second that a hardware device can change images. So, for example, say you have a 120Hz display and a 60 FPS video source. Your screen will refresh 120 times per second, but will only display 60 unique frames per second, showing each frame twice. That’s pretty much it!
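Here is a quick Python sketch of that 120Hz and 60 FPS example. It assumes the simplest possible behavior, where each refresh shows the most recent frame the source has produced. The function name is made up for illustration, and real displays and drivers may behave differently.

```python
def frame_shown_at_refresh(refresh_index, refresh_hz, source_fps):
    """Index of the source frame on screen during a given refresh,
    assuming the display shows the most recent frame available."""
    return refresh_index * source_fps // refresh_hz


# First eight refreshes of a 120Hz display fed by a 60 FPS source.
shown = [frame_shown_at_refresh(i, 120, 60) for i in range(8)]
print(shown)  # [0, 0, 1, 1, 2, 2, 3, 3]

# Unique frames displayed across one full second of refreshes.
unique_per_second = len({frame_shown_at_refresh(i, 120, 60) for i in range(120)})
print(unique_per_second)  # 60
```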

Meet Ry, “TechGuru,” a 36-year-old technology enthusiast with a deep passion for tech innovations. With extensive experience, he specializes in gaming hardware and software, and has expertise in gadgets, custom PCs, and audio.

Besides writing about tech and reviewing new products, he enjoys traveling, hiking, and photography. Committed to keeping up with the latest industry trends, he aims to guide readers in making informed tech decisions.